Following up on our previous AV functional architecture post, today’s post provides an overview of typical kinds of AV sensors, along with a brief discussion of each sensor’s purpose, strengths, and limitations. This overview is intended to strike a balance between a simple infographic (if you want to stop reading now!) and a detailed technical description. For those interested in a more technical introduction, links to some deep-dive resources are provided at the end.

GPS / GNSS

The Global Positioning System (GPS) is a U.S. government-owned instance of a Global Navigation Satellite System (GNSS). A GNSS receiver uses signals from multiple satellites to determine its geolocation and the current time.

GNSS is a well-proven and inexpensive technology. Historically, one of its main limitations has been accuracy. The best available accuracy for high-end automotive GNSS has been 40-60 cm. However, newer triple-band GNSS receivers are now claiming accuracy better than 10 cm. The other main limitation of GNSS is sensitivity to obstructions. GNSS satellite signals travel by line of sight, so they may be blocked by buildings, tunnels, trees, mountains, etc., leading to a loss of signal even for high-accuracy units.

Due to these limitations, GNSS is typically limited to synchronizing time and bootstrapping the localization function prior to applying a more accurate localization technique such as LIDAR or radar localization. However, GPS technology continues to improve, and GPS will likely remain a key component of AVs for a long time.

LIDAR

Perhaps the most controversial AV sensor, LIDAR (“Laser Imaging, Detection and Ranging” or “Light Detection and Ranging”) is used by many AV developers for both localization and perception. A few other AV developers have avoided LIDAR altogether due to its cost.

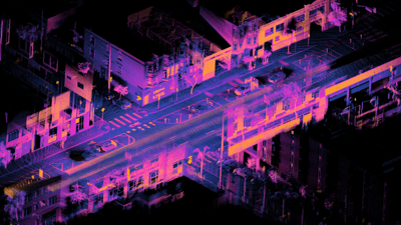

Typical high-end automotive LIDAR quickly rotates an array of 16-128 lasers and detects the returning reflections from the surrounding environment. Each “return” point represents the range to a point on a physical object (conventional time-of-flight LIDAR does not measure velocity directly). The result is a high-definition point cloud that essentially paints a 3D picture of the environment. Figure 4 shows an example of a high-resolution LIDAR point cloud. Note that there are also non-rotating static LIDAR sensors with a limited field of view. These static LIDAR sensors are far less expensive and are beginning to gain traction in high-end production vehicles (e.g. Volvo, Mercedes).

Ouster OS1-64 lidar point cloud of intersection of Folsom and Dore St, San Francisco by Daniel Lu licensed under CC BY 4.0
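To make the idea of a point cloud a little more concrete, here is a minimal sketch of how a single return might be turned into a 3D point. It assumes each return is reported as a range plus the beam's azimuth and elevation angles in the sensor frame; the function name and the sample values are hypothetical, and real sensors apply additional calibration and motion corrections.

```python
import math

def lidar_return_to_xyz(range_m, azimuth_deg, elevation_deg):
    """Convert one return (range + beam angles) to x, y, z in the sensor frame.

    Assumed convention: x forward, y left, z up; azimuth measured from x
    toward y, elevation measured up from the horizontal plane.
    """
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    horizontal_m = range_m * math.cos(el)  # projection onto the ground plane
    x = horizontal_m * math.cos(az)
    y = horizontal_m * math.sin(az)
    z = range_m * math.sin(el)
    return (x, y, z)

# Hypothetical returns: (range in m, azimuth in deg, elevation in deg)
returns = [(10.0, 0.0, 0.0), (10.0, 90.0, 0.0), (5.0, 0.0, 30.0)]
point_cloud = [lidar_return_to_xyz(*r) for r in returns]
```

Repeating this conversion for every laser at every rotation angle is what builds up the dense 3D picture described above.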

Advantages of LIDAR include high resolution, accurate distance measurement, and no sensitivity to lighting conditions. Limitations include cost and sensitivity to rain and snow.

LIDAR technology is rapidly evolving, and there are many variations. A more detailed overview of current LIDAR technology by Royo et al. may be found in the literature.

Cameras

Cameras are perhaps the most natural and ubiquitous AV sensors. I will not discuss how cameras work in this post (check Wikipedia), but it is important to know that there may be multiple cameras of multiple types on an AV.

For conventional cameras, multiple devices are required to provide full 360° coverage around the vehicle. Cameras may vary in focal length and field of view, including long-range, medium-range, and short-range fish-eye cameras.

Advantages of cameras are their cost, the ability to see color, and the wide array of software algorithms for computer vision. Disadvantages include the relative lack of depth information, sensitivity to lighting conditions, and sensitivity to weather.

Less conventional cameras include stereo cameras, which combine two or more cameras to produce a 3D image (for an extreme example, check out Light). Thermal imaging cameras, which utilize the infrared spectrum, are also beginning to see wider application.
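As a rough illustration of how a stereo pair recovers depth, the sketch below uses the standard pinhole relationship depth = focal length × baseline / disparity for a rectified camera pair. The function name and the numbers are hypothetical and chosen only for illustration.

```python
def stereo_depth_m(focal_length_px, baseline_m, disparity_px):
    """Depth of a point seen by a rectified stereo pair.

    focal_length_px: focal length expressed in pixels
    baseline_m:      distance between the two camera centers
    disparity_px:    horizontal shift of the point between the two images
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a finite depth")
    return focal_length_px * baseline_m / disparity_px

# Hypothetical rig: 1000 px focal length, 0.3 m baseline
depth_at_10_px = stereo_depth_m(1000.0, 0.3, 10.0)  # 30 m
depth_at_9_px = stereo_depth_m(1000.0, 0.3, 9.0)    # a little over 33 m
```

Note how a one-pixel change in disparity moves the estimate by more than 3 m at this range, which is one reason stereo depth becomes less reliable for distant objects.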

Radar

Radar is a mature technology that uses electromagnetic waves to detect objects and measure their range and velocity. Modern automotive radar comes in different models for short-, medium-, and long-range sensing. A typical AV is equipped with multiple radar sensors to provide a complete field of view. These are also becoming increasingly common in ADAS for production vehicles.
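The physics behind radar range and velocity measurement can be sketched in a few lines: range follows from the round-trip travel time of the wave, and radial velocity follows from the Doppler shift of the reflection. The example below assumes a 77 GHz carrier, a common automotive radar band; the function names and sample values are hypothetical, and production radars derive these quantities from more elaborate modulated waveforms.

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0

def range_from_round_trip(round_trip_s):
    """Range to a target from the echo's round-trip time."""
    return SPEED_OF_LIGHT_M_S * round_trip_s / 2.0

def radial_velocity_from_doppler(doppler_shift_hz, carrier_hz=77e9):
    """Radial (closing) speed of a target from the Doppler shift of its echo."""
    return doppler_shift_hz * SPEED_OF_LIGHT_M_S / (2.0 * carrier_hz)

# Hypothetical echo: 1 microsecond round trip, 5137 Hz Doppler shift
target_range_m = range_from_round_trip(1e-6)               # roughly 150 m
closing_speed_m_s = radial_velocity_from_doppler(5137.0)   # roughly 10 m/s
```

Because velocity comes directly from the frequency shift, radar can report it from a single measurement rather than by differencing positions over time.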

Advantages of radar include its relative insensitivity to weather and lighting conditions, long-range detection, and low cost. The primary disadvantage is its low resolution.

High-resolution “imaging” radar for autonomous vehicles is an emerging technology that addresses the resolution weakness of radar. Another type of radar currently being researched for AVs is ground penetrating radar (GPR). GPR is used to develop detailed maps of underground features, which the AV may then use for precise localization.

With improvements in radar point density, radar has an increasingly important role in AV technology, including both perception and localization. Detailed overviews of automotive radar technology may be found in the literature.

Ultrasonic

Ultrasonic sensors use high-frequency sound waves to detect objects. They are well established in the automotive industry, primarily for short-distance detection such as parking assist functions and pickup/dropoff obstacle detection.

Ultrasonic sensor advantages are their short-range accuracy and low cost. However, they are limited to short-range detection, and they are sensitive to material characteristics and temperature. This post gives a nice short description of the pros and cons.
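The temperature sensitivity mentioned above can be illustrated with a small time-of-flight sketch. It assumes the common linear approximation for the speed of sound in air, about 331.3 + 0.606·T m/s with T in °C; the function names and the 10 ms echo time are hypothetical.

```python
def speed_of_sound_m_s(air_temp_c):
    """Approximate speed of sound in air (linear approximation in temperature)."""
    return 331.3 + 0.606 * air_temp_c

def ultrasonic_distance_m(echo_time_s, air_temp_c):
    """Distance to an obstacle from the echo's round-trip time."""
    return speed_of_sound_m_s(air_temp_c) * echo_time_s / 2.0

# Same hypothetical 10 ms echo interpreted at two air temperatures
distance_warm_m = ultrasonic_distance_m(0.010, 20.0)
distance_cold_m = ultrasonic_distance_m(0.010, -10.0)
error_if_uncompensated_m = distance_warm_m - distance_cold_m
```

In this sketch the same echo time maps to distances that differ by roughly 9 cm between 20 °C and -10 °C, so a sensor that ignores temperature would misjudge a nearby obstacle by that amount.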

IMU

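An Inertial Measurement Unit (IMU) measures the linear acceleration and angular rate of the vehicle using accelerometers and gyroscopes, often supplemented by magnetometers for heading. The vehicle's velocity and heading are then estimated by integrating these measurements over time.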

An IMU only provides information on relative motion, so in AV applications it is typically paired with a source of absolute localization such as GPS and/or LIDAR. If the IMU estimate of absolute position is not corrected, the accuracy will degrade in a relatively short time due to accumulated error.
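The accumulated error is easy to demonstrate with a minimal one-dimensional dead-reckoning sketch. It assumes a stationary vehicle whose accelerometer has a small constant bias of 0.01 m/s² sampled at 100 Hz; the bias value, sample rate, and function name are hypothetical and chosen only for illustration.

```python
def dead_reckon_1d(accel_samples_m_s2, dt_s):
    """Integrate acceleration twice (simple Euler steps) to estimate position."""
    velocity_m_s = 0.0
    position_m = 0.0
    for accel in accel_samples_m_s2:
        velocity_m_s += accel * dt_s       # acceleration -> velocity
        position_m += velocity_m_s * dt_s  # velocity -> position
    return position_m

BIAS_M_S2 = 0.01   # assumed constant accelerometer bias
DT_S = 0.01        # assumed 100 Hz sample rate

# The vehicle is actually stationary, so every sample is pure bias.
samples = [BIAS_M_S2] * 6000               # 60 seconds of data
drift_m = dead_reckon_1d(samples, DT_S)
```

After only 60 seconds the position estimate has drifted by about 18 m even though the vehicle never moved, which matches the 0.5·b·t² growth expected from a constant bias b. This is why the IMU estimate needs regular correction from an absolute source.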

Other AV Sensors

A variety of other sensors may be available depending on the vehicle platform and the automated driving system. They are briefly described below:

- Wheel Encoders: Wheel speed and angle

- Vehicle Sensors: Door status, trunk status, vehicle faults, etc.

- Cabin cameras: Monitoring safety drivers and/or passengers

- Acoustic sensors: Detecting sirens and other environmental noises

- Pavement sensors: Detecting wet or icy pavement

- Vehicle-to-Vehicle: Real-time data sharing, emergency vehicles

- Vehicle-to-Infrastructure: Traffic light status, construction information

- Sensor cleaning systems: Compressed air, liquid cleaning

Many of these other sensors could be considered “auxiliary”. The choice of whether or not to use them (and whether they are safety critical) would depend on the overall sensing strategy, discussed below.

AV Sensor Packages

An AV will typically use a set of multiple complementary sensors where the limitations of some sensors will be offset by the strengths of other sensors. Interested readers will find several more comprehensive surveys of AV sensor strategies here and here.
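One simple way to picture how complementary sensors offset each other is inverse-variance weighting, where each sensor's estimate is weighted by how much it is trusted. This is only an illustrative sketch, not a description of any particular AV's fusion method; the function name and the variances assigned to each sensor are hypothetical.

```python
def fuse_estimates(estimates):
    """Fuse independent (value, variance) estimates by inverse-variance weighting.

    Returns (fused_value, fused_variance).
    """
    weights = [1.0 / variance for _, variance in estimates]
    total_weight = sum(weights)
    fused_value = sum(w * value for w, (value, _) in zip(weights, estimates)) / total_weight
    return fused_value, 1.0 / total_weight

# Hypothetical range estimates to the same object, in metres:
radar_range = (50.0, 0.25)   # radar: good range accuracy (assumed variance)
camera_range = (52.0, 4.0)   # camera: weak depth information (assumed variance)

fused_range_m, fused_variance = fuse_estimates([radar_range, camera_range])
```

The fused range lands close to the radar value because radar is trusted more for distance, and the fused variance is lower than either sensor's alone. The same idea runs in the other direction for quantities such as object classification, where the camera would carry more weight.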

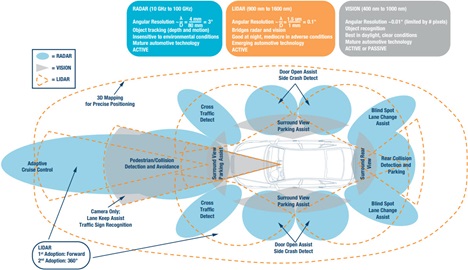

The figure below (linked from Jacobs) provides an overhead view of an AV along with the field of view of its sensor package. Note the overlapping sensors and the differing ranges of the sensors.

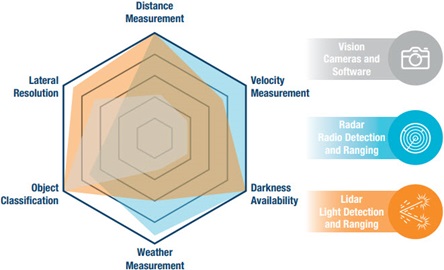

The figure below provides a visual representation of the limitations and sensitivities of the different sensor types, illustrating how the strengths and limitations of the different sensor modalities are complementary.

Further Reading

For those interested in a more technical introduction to sensors, a more detailed discussion of sensor types and sensor architectures may be found in:

- A Survey of Autonomous Driving: Common Practices and Emerging Technologies

- Overview of Autonomous Vehicle Sensors and Systems

- An Overview of Autonomous Vehicles Sensors and Their Vulnerability to Weather Conditions

Conclusion

Hopefully this overview of AV sensors struck the right balance between accessibility and detail. In our next post, we will head back to functional safety. Autonomous vehicles pose some novel challenges for traditional safety engineering methods. Up next, we will discuss these challenges through an overview of the recent safety literature.

Think AV safety is easy? Check out our post A Review of AV Safety Challenges.